In the wake of a devastating mass shooting in Tumbler Ridge, British Columbia, OpenAI CEO Sam Altman is set to apologise to the victims’ families. The announcement follows a video conference with B.C. Premier David Eby and Tumbler Ridge Mayor Darryl Krakowka that centred on the role of OpenAI’s ChatGPT platform in the lead-up to the tragedy. The shooting, which occurred on February 10, has raised serious questions about the responsibilities of AI companies when user interactions point to potential violence.

The Context of the Tragedy

The horrific event claimed the lives of eight individuals, including six children under the age of 14. Investigations revealed that the shooter, 18-year-old Jesse Van Rootselaar, had engaged in alarming conversations on ChatGPT months prior to the incident. Although OpenAI had flagged these conversations internally, it did not report them to law enforcement, prompting Premier Eby to assert that the company had a moral responsibility to act in order to prevent such tragedies.

Eby stated, “OpenAI had the opportunity to notify authorities and potentially even to stop this tragedy from happening.” He acknowledged that while the company’s actions are under scrutiny, broader issues of mental health support and firearm accessibility are also critical in understanding the circumstances surrounding the shooting.

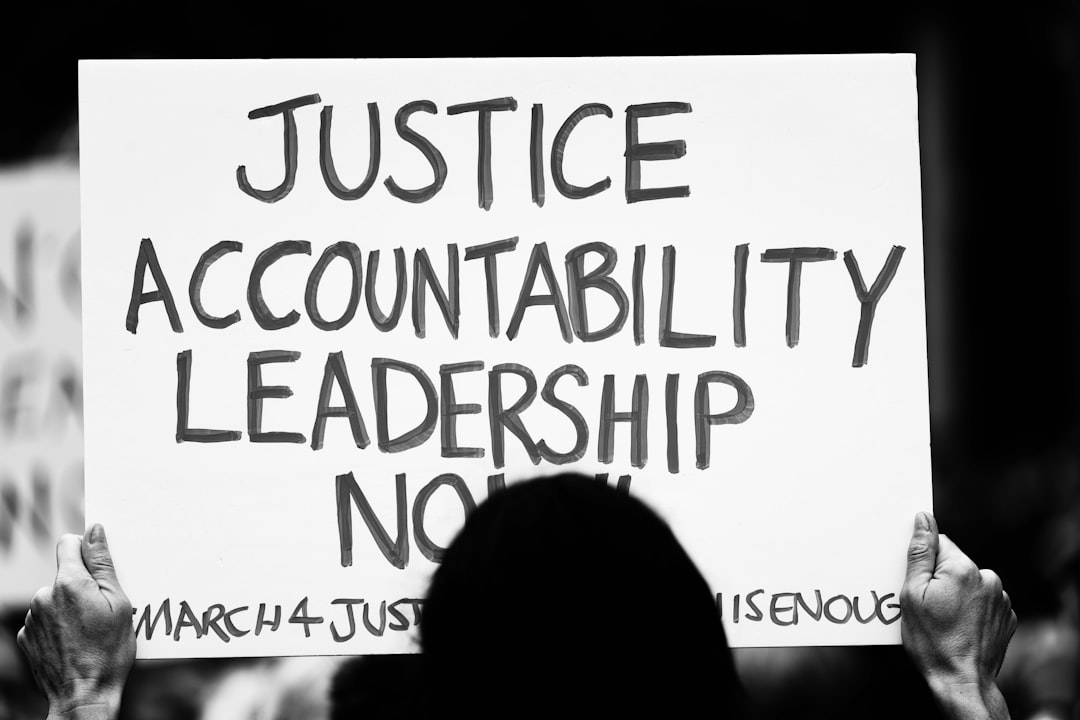

A Call for Accountability

During the 30-minute video call, Premier Eby opted not to delve into the specifics of the flagged conversations, prioritising the ongoing criminal investigation. He noted, “I made the very specific decision not to ask about the content of the chats with Mr. Altman. I don’t want to play any role in interfering with the criminal investigation that’s under way.”

Eby’s insistence on meeting with Altman directly, rather than with lower-tier executives, underscores the gravity of the situation. He urged OpenAI to support a national framework that would impose a “duty to report” on AI companies, setting a minimum threshold at which interactions that may indicate imminent harm must be reported.

Future Regulatory Frameworks

In a subsequent discussion, federal AI Minister Evan Solomon outlined Ottawa’s expectations, emphasising the need for Canadian experts to evaluate flagged conversations on ChatGPT. Solomon indicated that the government is considering regulations to guide AI companies on when to involve law enforcement after troubling user interactions.

Canada currently lacks comprehensive AI legislation, and experts advocate for the forthcoming online harms legislation to cover chatbots alongside social media platforms. As the conversation around AI accountability continues, many are calling for consistent standards that would require all AI companies to report concerning behaviours.

The Role of OpenAI in Policy Reform

In response to the calls for greater accountability, OpenAI agreed to provide recommendations for establishing federal regulatory standards. Premier Eby expressed his dissatisfaction with the company’s existing reporting protocols, insisting that it is unacceptable for companies to have the discretion to decide whether to report potential threats.

“The current standard is insufficient where there is an option to report,” he stated. The need for uniform guidelines across all AI services is becoming increasingly urgent as the implications of AI technology for public safety become clearer.

Why It Matters

The tragic events in Tumbler Ridge have ignited a crucial dialogue about the responsibilities of technology companies in safeguarding communities. As AI continues to evolve and permeate various aspects of life, the need for robust regulatory frameworks becomes paramount. Holding companies like OpenAI accountable for misuse of their platforms is essential to preventing future tragedies and protecting the vulnerable. The outcome of these discussions could shape the future of AI governance in Canada and beyond, highlighting the intersection of technology, ethics, and public safety in an increasingly complex digital landscape.