The family of a 12-year-old girl critically injured in the tragic Tumbler Ridge school shooting has initiated legal action against OpenAI, claiming the company failed to alert authorities about the shooter’s violent intentions. The civil suit, filed on Monday in the British Columbia Supreme Court by Cia Edmonds on behalf of her daughters, Maya and Dahlia Gebala, seeks to uncover the truth surrounding the incident that shattered their lives.

Legal Action and Allegations

According to the civil claim, OpenAI had prior knowledge of concerning interactions between the shooter and its ChatGPT chatbot, which were flagged but not reported to law enforcement. The lawsuit contends that this negligence contributed to the horrific events of February 10, when an armed individual opened fire at Tumbler Ridge Secondary School, resulting in devastating injuries and loss of life.

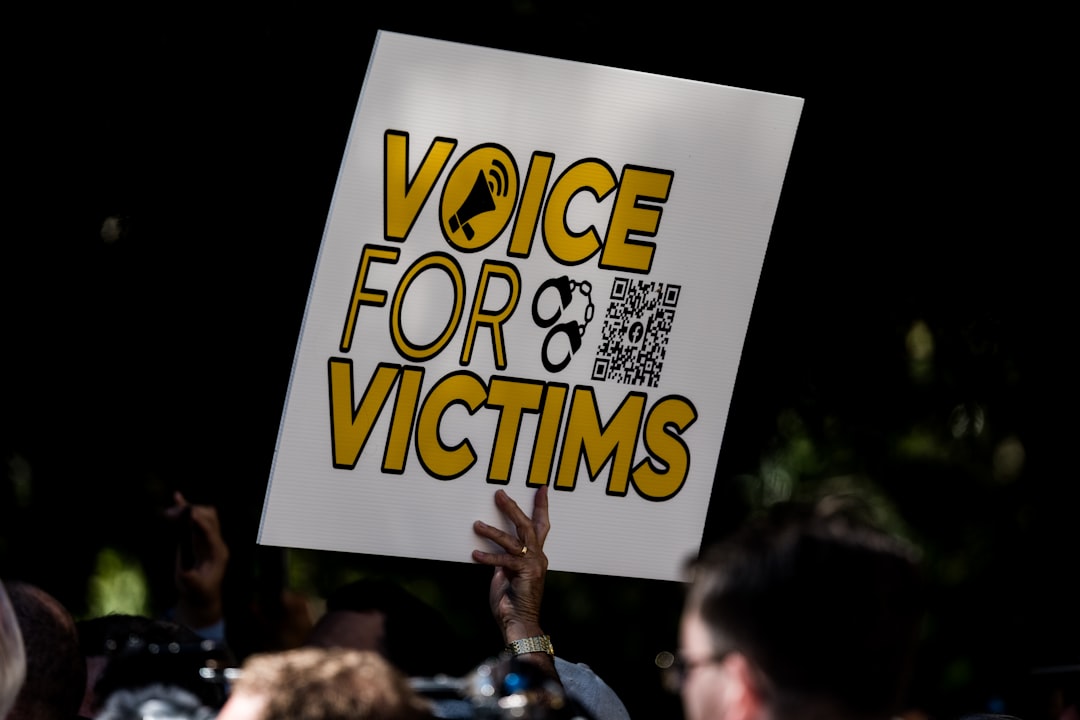

“The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened, to impose accountability, to seek redress for harms and losses, and to help prevent another mass-shooting atrocity in Canada,” stated Rice Parsons Leoni & Elliott LLP, the law firm representing the family.

The Impact on the Victims

Maya was shot three times during the attack, and one bullet penetrated her skull. The civil claim details the catastrophic consequences she now faces, including a traumatic brain injury, permanent disabilities, and psychological trauma. She remains in care at BC Children’s Hospital, and her prognosis is uncertain.

Her sister, Dahlia, who was present during the incident but physically unharmed, is experiencing significant mental health challenges, including PTSD and anxiety. Their mother, Cia Edmonds, is also grappling with similar psychological issues, which have adversely affected her quality of life and financial stability.

OpenAI’s Response and Reforms

While OpenAI has not yet issued a public statement regarding the lawsuit, reports indicate the company is aware of the severity of the situation. After the shooting, it emerged that the shooter had discussed violent scenarios with ChatGPT in June, and that an internal review system had flagged those conversations. Although some employees urged action, the company did not notify Canadian authorities.

In light of the tragedy, OpenAI has announced plans to enhance its protocols so that similar interactions trigger an alert to law enforcement in the future. B.C. Premier David Eby has also said that OpenAI CEO Sam Altman is prepared to apologise to the families affected by the shooting.

The Broader Context

The civil claim against OpenAI raises essential questions about the responsibilities of tech companies in preventing violence. Critics argue that the rapid rollout of AI technologies often overlooks necessary safety measures, potentially endangering lives. The plaintiffs are pursuing undisclosed punitive damages, asserting that OpenAI’s actions are not only legally indefensible but also morally unacceptable.

Why it Matters

This lawsuit is more than a legal battle; it reflects growing concern about the intersection of technology and public safety. As AI becomes more prevalent, so too does the urgency for responsible development and deployment practices. The outcome of this case could have far-reaching implications for how tech companies address the risks associated with their products, and it may shape future regulations and standards in the industry.