The family of a 12-year-old girl critically injured in the Tumbler Ridge school shooting has filed a civil lawsuit against OpenAI, the company behind the widely used ChatGPT chatbot. The claim, lodged on Monday in British Columbia Supreme Court by Cia Edmonds, seeks to hold the tech giant accountable for allegedly failing to alert authorities to the shooter’s alarming behaviour before the February 10 shooting.

Allegations of Negligence

The civil claim contends that OpenAI had prior knowledge of the shooter’s violent intentions, having flagged concerning interactions with its chatbot months before the shooting. The shooter reportedly discussed gun violence with the chatbot, and an automated system within OpenAI recognised those conversations. Even so, the company did not inform law enforcement of the potential threat.

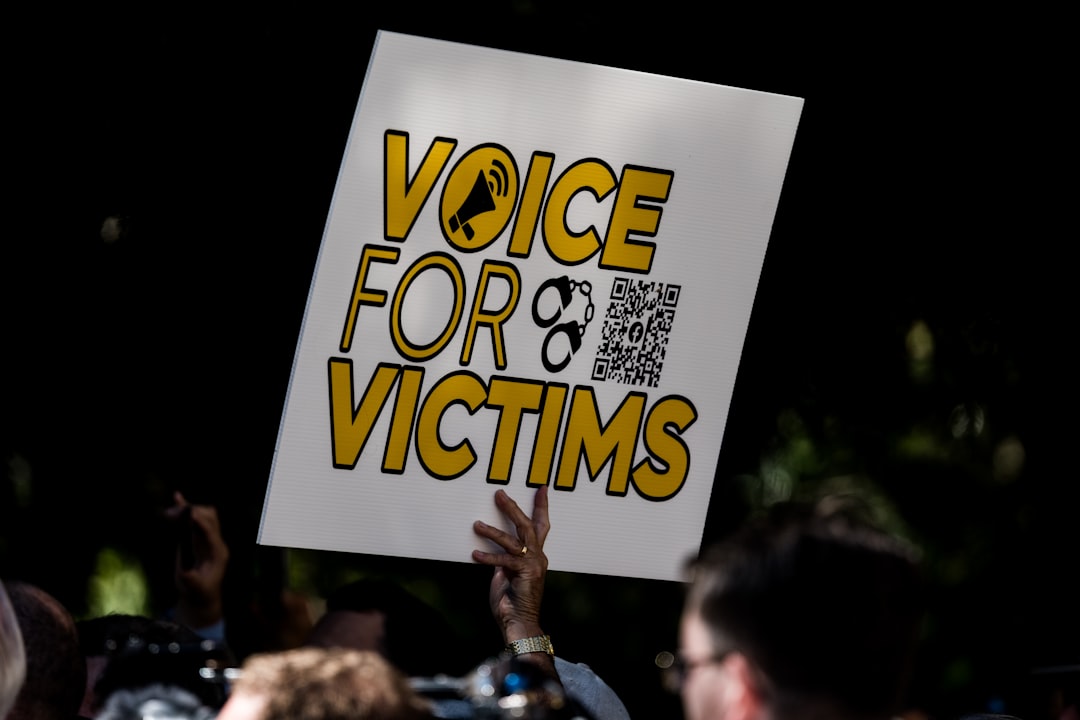

Cia Edmonds, representing her daughters, Maya and Dahlia Gebala, said, “The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened, to impose accountability, to seek redress for harms and losses, and to help prevent another mass-shooting atrocity in Canada.”

The Impact on Victims

Maya, who sustained multiple gunshot wounds, including one that entered her skull and others that struck her neck and face, is currently receiving treatment at BC Children’s Hospital. The injuries have resulted in severe cognitive and physical disabilities, including right-sided hemiplegia, and the development of post-traumatic stress disorder (PTSD), among other afflictions.

Dahlia, though not physically harmed, has experienced significant psychological distress, suffering from PTSD, anxiety, and sleep disturbances. Their mother, Ms. Edmonds, has reported similar mental health impacts, experiencing pain, loss of enjoyment of life, and financial repercussions as a result of the trauma.

OpenAI’s Response and Changes

As of Monday, OpenAI had not provided a comment regarding the lawsuit. However, following the shooting, the company announced that it had implemented changes aimed at improving safety protocols. These adjustments are designed to ensure that any similar interactions would now be flagged to law enforcement.

In the aftermath of the incident, British Columbia Premier David Eby revealed that OpenAI CEO Sam Altman was prepared to issue an apology to the affected families. The lawsuit also criticises OpenAI for hastily releasing its large language model without conducting adequate safety evaluations, claiming that the technology contains “hazardous defects” that have now led to tragic consequences.

Seeking Justice and Accountability

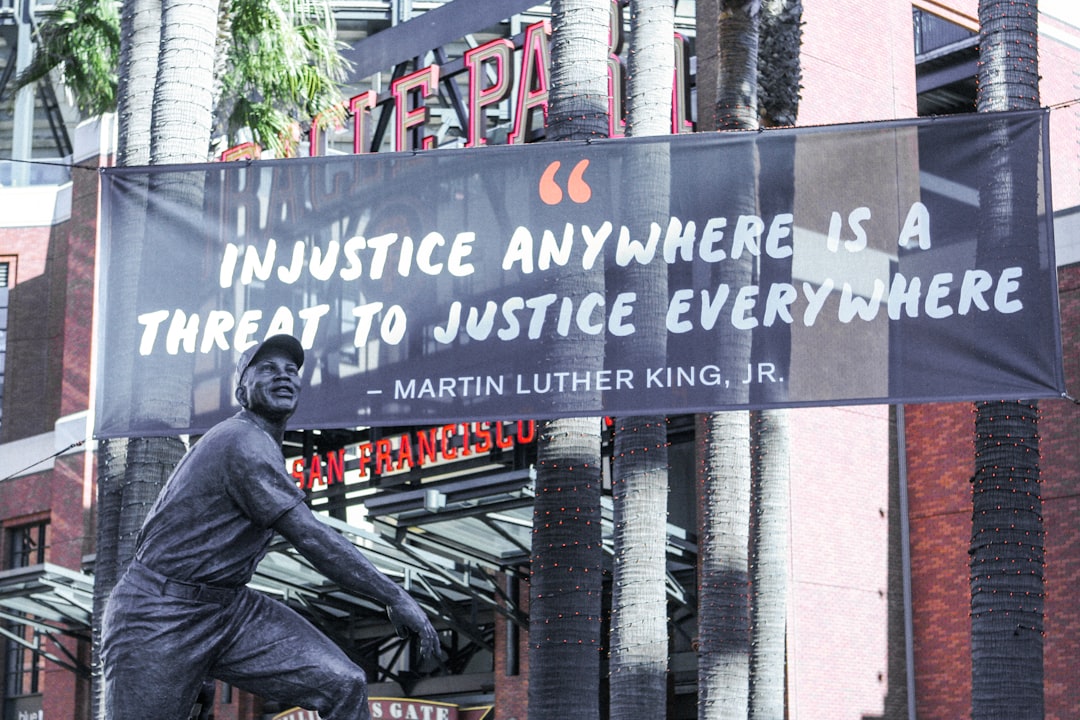

The plaintiffs are pursuing undisclosed punitive damages, labelling OpenAI’s actions as “reprehensible and morally repugnant.” While these allegations have yet to be tested in court, they underscore growing concern over the responsibility of tech companies to protect public safety.

Why it Matters

This lawsuit not only seeks justice for the victims of the Tumbler Ridge shooting but also raises critical questions about the responsibilities of tech firms in monitoring and acting upon potentially dangerous user interactions. The outcome could set a significant legal precedent regarding the liabilities of technology companies and their moral obligations to the communities they serve. As society grapples with the implications of AI in everyday life, this case may shape future regulations and practices surrounding the deployment of such technologies, emphasising the need for a balance between innovation and public safety.