
In a groundbreaking response to a recent investigation, the mental health charity Mind has initiated an extensive inquiry into the intersection of artificial intelligence and mental health. This decision follows revelations from The Guardian, which highlighted the distribution of inaccurate and potentially harmful medical advice via Google’s AI-generated Overviews. Set to last a year, this inquiry aims to establish critical safeguards as AI increasingly shapes the landscape of mental health support.

The Context of Concern

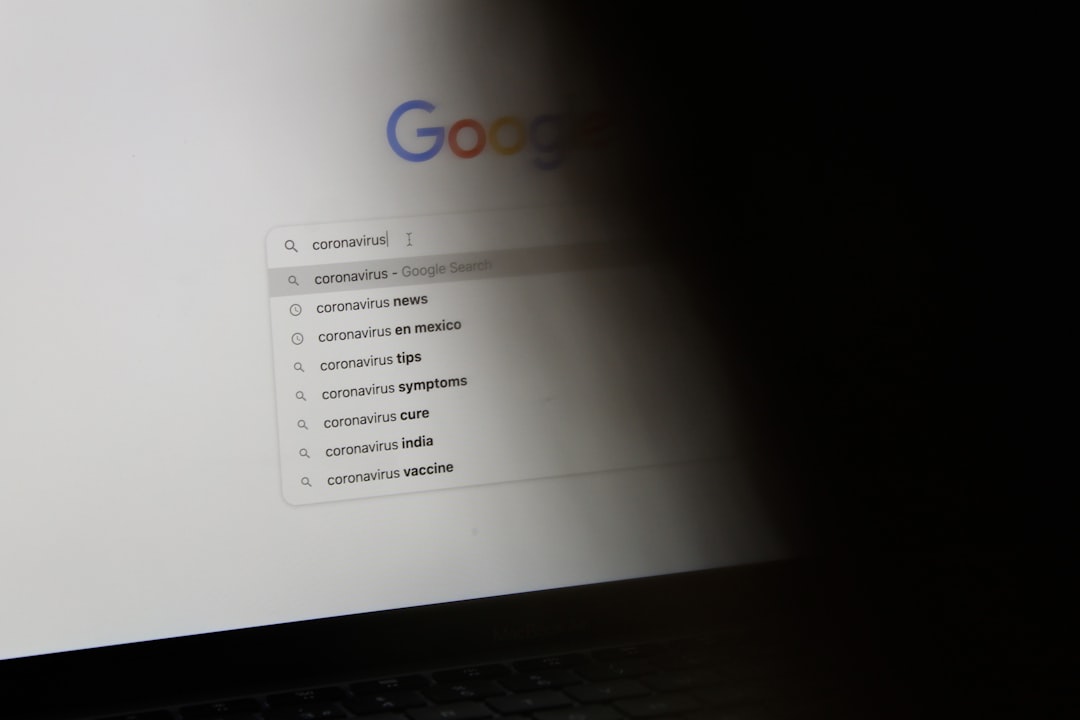

The inquiry comes in the wake of alarming findings that Google’s AI Overviews, which are viewed by over two billion users each month, have disseminated misleading health information. These summaries, prominently positioned above standard search results on the world’s most frequented website, have raised significant concerns among mental health professionals. Dr Sarah Hughes, CEO of Mind, emphasised the dangers posed by “dangerously incorrect” mental health guidance, which could exacerbate conditions for vulnerable individuals and deter them from seeking necessary treatment.

The AI Overviews are designed to provide concise answers to user queries, yet the investigation revealed that they frequently contain inaccuracies across various health topics, including mental disorders, cancer, and liver disease. Such misinformation not only misguides individuals but may also have life-threatening implications in severe cases.

A Call for Responsible Innovation

Dr Hughes articulated the potential of AI to revolutionise mental health care, asserting that the technology could enhance access to support and improve public services. However, she stressed that these benefits can only be realised if AI is developed with responsible guidelines that prioritise user safety. The inquiry will bring together a diverse group of stakeholders, including leading medical professionals, people with lived experience of mental health issues, policymakers, and technology developers, to collaboratively shape a safer digital health environment.

Mind’s initiative is particularly noteworthy as it represents the first comprehensive global examination of AI’s influence on mental health. Hughes said: “We want to ensure that innovation does not come at the expense of people’s wellbeing.”

The Illusion of Clarity

Rosie Weatherley, Mind’s information content manager, highlighted a critical flaw in the AI Overviews: while they may offer brevity and straightforward language, they sacrifice depth and trustworthiness. Previously, users could often navigate to credible health websites that provided nuanced information, case studies, and a pathway to support. AI Overviews, however, strip away this richness, presenting an oversimplified summary that may mislead users into believing they have received definitive advice.

This shift underscores the need for robust regulatory frameworks that ensure the accuracy of health information disseminated through AI technologies. Weatherley’s account of how people previously found mental health information online is a reminder of the value of credible sources and of the danger of simplified summaries that lack context.

Google’s Response and Ongoing Challenges

Despite the growing concerns, Google has defended its AI Overviews, asserting that they are generally reliable and that significant investments have been made to ensure accuracy, particularly in health-related topics. However, the tech giant has also acknowledged the limitations of its systems, stating that without specific examples of inaccuracies, it is difficult to address individual claims.

The controversy surrounding Google’s AI-generated health information raises broader questions about the responsibilities of tech companies in the realm of public health. As these platforms evolve, the imperative for transparency and accountability becomes more pressing.

Why It Matters

The inquiry launched by Mind is a crucial step towards addressing the potential risks associated with AI in mental health care. As technology continues to advance and integrate into everyday life, ensuring the accuracy and reliability of health information becomes paramount. The implications of misleading advice can be severe, affecting not just individual wellbeing but also public health at large. This initiative could pave the way for more stringent regulations and ethical standards in the rapidly evolving field of digital health, ultimately safeguarding vulnerable populations from the risks posed by unchecked technological advancements.