
In a deeply concerning incident that has reverberated across Canada, the tragic mass shooting in Tumbler Ridge, British Columbia, has not only claimed eight lives but also raised profound questions about the responsibilities of artificial intelligence (AI) companies. The 18-year-old shooter, Jesse Van Rootselaar, reportedly engaged in discussions with the AI chatbot ChatGPT prior to the event, prompting scrutiny over the protocols governing AI interactions and their implications for public safety.

The Tumbler Ridge Shooting: A Disturbing Case

On February 10, 2025, Tumbler Ridge was thrust into the national spotlight following one of the most devastating mass shootings in Canadian history. In the aftermath, it was revealed that the shooter had communicated with OpenAI’s ChatGPT, although the specifics of those conversations remain undisclosed. OpenAI flagged the interactions internally but made the controversial decision not to alert law enforcement, raising critical questions about the thresholds for reporting potential threats.

Blair Attard-Frost, an assistant professor at the University of Alberta focusing on AI governance, expressed concern over the implications of this case. “AI companies in Canada have been given significant latitude to decide on their own safety standards,” Attard-Frost said, emphasizing the need for a more structured approach to monitoring dangerous interactions.

The Ethical Dilemma of AI Engagement

The tragedy has spotlighted the intimate nature of human-chatbot relationships, particularly among vulnerable individuals. ChatGPT, with its 800 million users globally, has become a confidant for many, including teenagers who might share their innermost thoughts and feelings. However, this reliance on AI as a conversational partner raises alarming ethical questions. Are these companies equipped to handle disclosures of self-harm or violence? And what obligations do they have to protect against potential harm?

As discussions around the incident continue, B.C. Premier David Eby has called for clearer guidelines on when AI companies should inform authorities. Currently, there is no comprehensive AI legislation in Canada, leaving a regulatory void that complicates how AI firms handle sensitive information.

The Need for Legislative Action

Experts argue that the time for regulatory action is now. The absence of structured guidelines poses serious risks to public safety, especially when chatbots may inadvertently facilitate harmful behaviour. Evan Solomon, Canada’s federal AI Minister, has acknowledged the urgency of the situation. While previous statements indicated a reluctance to impose strict regulations, the Tumbler Ridge shooting has shifted the conversation towards the necessity of establishing clear safety protocols for AI companies.

Current discussions include the potential for Canada to adopt legislation similar to that of the European Union, which requires developers to conduct safety assessments and mitigate risks associated with AI technologies. However, the path to such legislation is fraught with challenges. Striking a balance between privacy and safety is paramount: measures that are too stringent may lead to overreach, while measures that are too lax could result in preventable tragedies.

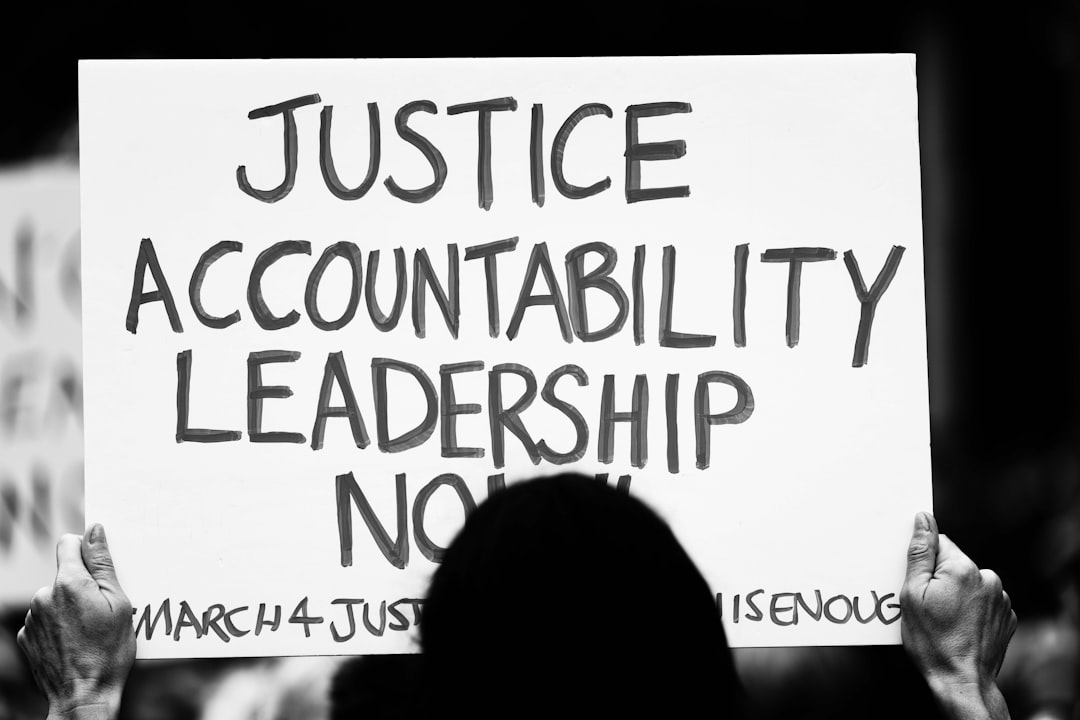

A Call for Transparency and Accountability

The unfolding discourse also highlights the need for transparency in AI company operations. OpenAI has stated it refers cases to authorities only when users present an imminent threat of serious harm. However, without a clear understanding of what constitutes that ‘imminent threat’, the criteria for intervention remain ambiguous.

Katrina Ingram, founder of Ethically Aligned AI, raised a poignant question: “Were these people equipped to make that kind of judgment call?” This underscores the broader concern that, in the absence of external oversight, AI companies may be ill-equipped to handle critical ethical decisions regarding user safety.

Why It Matters

The Tumbler Ridge shooting serves as a tragic reminder of the potential fallout from unregulated AI technology. As society increasingly integrates AI into everyday life, the implications for mental health, privacy, and public safety cannot be overstated. The urgency for a robust framework governing AI interactions is clear. Without meaningful legislation and transparent practices, the risk of future tragedies looms ever larger, compelling us to reflect on how we engage with these powerful technologies. The lives lost in Tumbler Ridge demand not just remembrance but action to ensure such a tragedy is never repeated.