The family of a 12-year-old girl severely injured in the Tumbler Ridge school shooting has filed a civil lawsuit against OpenAI. Cia Edmonds filed the claim on Monday in the British Columbia Supreme Court on behalf of herself and her two daughters, Maya and Dahlia Gebala. The lawsuit accuses the tech giant of negligence, alleging that it had prior knowledge of the shooter’s violent intentions but failed to notify authorities.

Allegations of Negligence

The civil claim draws on media reports and public statements to assert that OpenAI was aware of troubling interactions between the shooter and its ChatGPT chatbot months before the shooting on February 10. Despite identifying concerning patterns, OpenAI allegedly did not report them to law enforcement agencies.

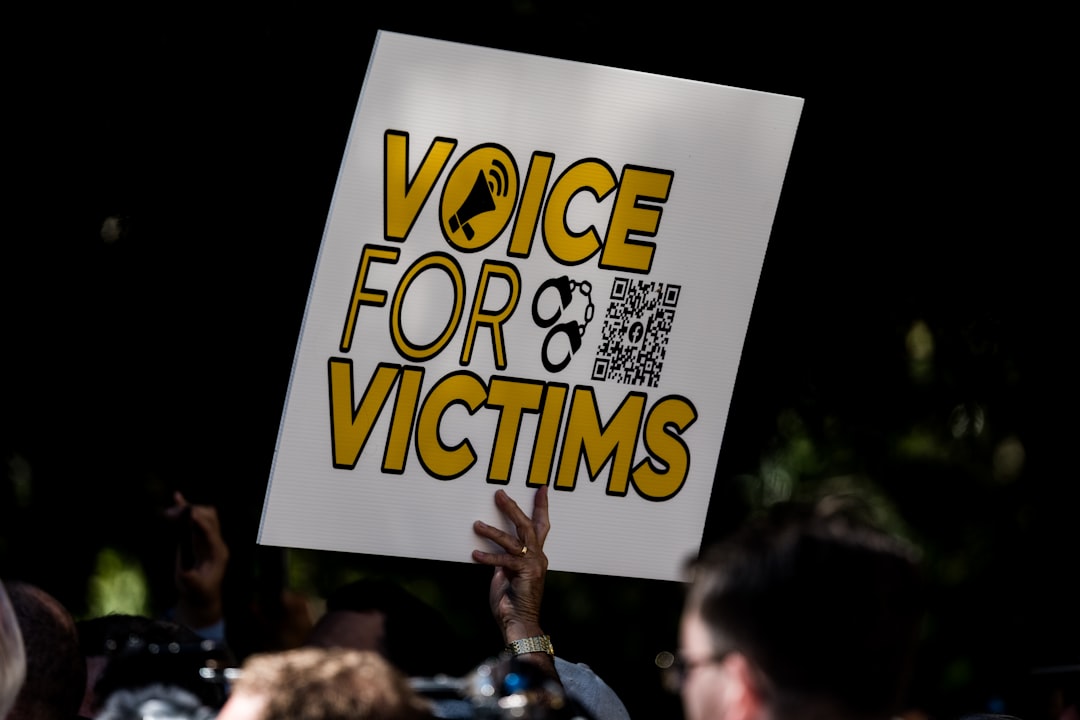

“The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened, to impose accountability, to seek redress for harms and losses, and to help prevent another mass-shooting atrocity in Canada,” stated Rice Parsons Leoni & Elliott LLP, the law firm representing the family.

The Impact on Victims

Maya Gebala was shot three times at close range and suffered life-altering injuries. One bullet penetrated her head above her left eye, another struck her neck, and a third grazed her cheek and earlobe. As a result, she has a traumatic brain injury, permanent cognitive and physical disabilities, right-sided hemiplegia, and psychological conditions including depression, anxiety, and post-traumatic stress disorder (PTSD). Maya remains under medical care at BC Children’s Hospital, and her long-term recovery is uncertain.

Her sister Dahlia, though physically unharmed, has been left with PTSD, anxiety, and sleep disturbances. Their mother, Cia, has reported similar psychological effects, which she says have affected her quality of life and caused economic losses.

OpenAI’s Response and Changes

OpenAI has not yet commented on the lawsuit. However, reports indicate that the company has been revising its protocols. Since the incident, OpenAI says it has implemented measures to ensure that similar interactions are flagged to law enforcement in the future.

B.C. Premier David Eby has said that OpenAI CEO Sam Altman is prepared to apologize to the affected families. The lawsuit contends that OpenAI rushed its product to market without sufficient safety evaluations and that the company was aware of “hazardous defects” in its technology.

Seeking Accountability

The plaintiffs are seeking an undisclosed amount in punitive damages. They argue that OpenAI’s actions were not only reckless but also morally reprehensible, and that they deeply harmed the victims and the broader community. The allegations in the lawsuit have not been tested in court, and the outcome could have significant implications for the tech industry’s responsibilities regarding AI technologies.

Why It Matters

This lawsuit highlights the urgent and often overlooked issue of tech accountability in the context of public safety. As AI technologies become more integrated into everyday life, companies like OpenAI face growing pressure to ensure their tools do not contribute to violence or harm. The outcome of this case may set a precedent for how tech firms are held accountable for the actions of their users, and it raises questions about the ethics of deploying advanced AI systems without adequate safeguards.