In a case that could redefine the relationship between social media platforms and user wellbeing, a Los Angeles courtroom is at the epicentre of a groundbreaking legal battle. For the first time, a jury is being asked to determine whether the design features of social media applications contribute to addiction and subsequent mental health issues. This case, led by a young Californian woman known as K.G.M., has the potential to set a precedent for how tech companies are held accountable for the impact of their platforms on users.

The Case Unfolds

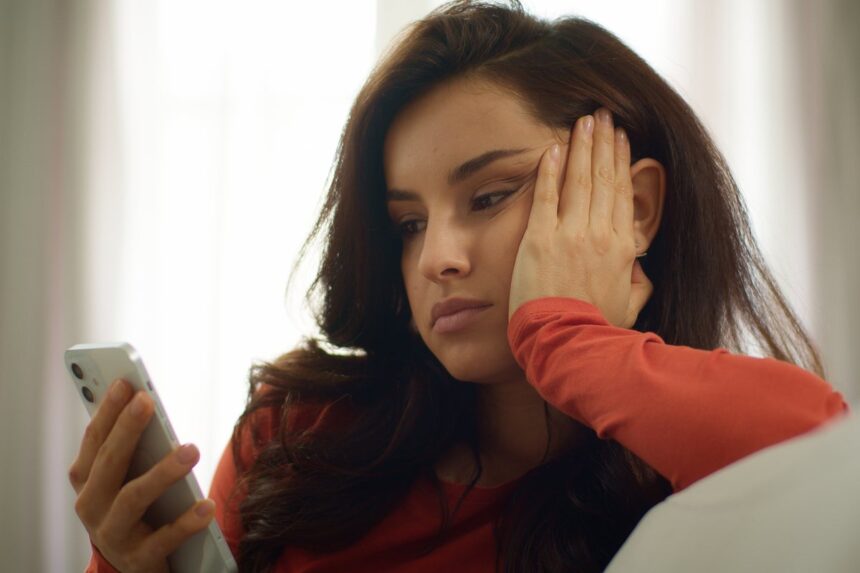

K.G.M., who began her digital journey on YouTube at the tender age of six and established her Instagram account by nine, has launched a lawsuit against Meta and Google. She claims that the addictive nature of these platforms—characterised by features such as endless scrolling, algorithmic recommendations, and variable rewards—has led to serious mental health struggles, including depression, anxiety, and body dysmorphia.

Prior settlements with TikTok and Snapchat have already put pressure on the remaining defendants, Meta and Google. The stakes are high; this case serves as a bellwether for around 1,600 other plaintiffs, including numerous families and school districts, all of whom have consolidated their claims in a California Judicial Council Coordination Proceeding.

Redefining Liability in the Digital Age

Historically, Section 230 of the Communications Decency Act has shielded tech companies from liability regarding user-generated content. However, K.G.M.’s legal team is taking a bold new approach by arguing that the harm stems not from user content but from the platforms’ engineering choices. Their argument hinges on the premise that these design elements—akin to addictive mechanisms in gambling—should be treated as a product defect.

Judge Carolyn Kuhl of the Los Angeles County Superior Court has allowed this case to move forward, distinguishing between claims about third-party content, which Section 230 protects, and claims about the platforms’ own design features, which the plaintiffs say directly contribute to user harm. This shift could pave the way for a new legal framework that holds tech companies accountable for their design choices, fundamentally altering the landscape of digital product liability.

What Companies Knew

A crucial aspect of K.G.M.’s case is what Meta knew about the potential dangers of its platform. The “Facebook Papers,” which revealed internal research highlighting Instagram’s detrimental effects on young people’s mental health, play a significant role here. Internal communications suggest that Meta employees were aware of the platform’s adverse impacts and compared its effects to drug addiction and gambling.

This echoes the legal arguments made against tobacco companies in the 1990s, when plaintiffs successfully demonstrated that firms had concealed knowledge about the dangers of their products. If K.G.M.’s legal team can prove that Meta’s leadership ignored these internal warnings, it could bolster their case significantly.

The Science Behind the Claims

While there is ongoing debate about the link between social media and mental health, studies indicate that certain demographics, particularly young girls, may be disproportionately affected. Although the Diagnostic and Statistical Manual of Mental Disorders (DSM-5) does not classify social media usage as an addiction, some researchers caution that population-level averages might obscure severe cases among vulnerable groups.

The legal challenge is not merely about proving that social media harms its users. It also involves demonstrating that platform designers had a responsibility to consider the potential negative consequences of their features, especially when evidence suggested that these harms were foreseeable.

Why It Matters

The outcome of the K.G.M. case could usher in a new era of accountability for tech companies, compelling them to re-evaluate not just the content they host, but the very design principles that govern user interaction. As legislation surrounding social media usage continues to evolve globally, this trial represents a critical juncture in determining the obligations that come with digital innovation. If successful, K.G.M.’s case could inspire a wave of similar lawsuits and lead to significant changes in how social media platforms operate, ultimately prioritising user safety over engagement metrics.