The family of a 12-year-old girl critically injured in the recent Tumbler Ridge shooting has filed a civil lawsuit against OpenAI. The claim, filed in the Supreme Court of British Columbia, alleges that the tech giant failed to alert authorities to the violent intentions of the shooter, who had previously held concerning conversations with the company’s ChatGPT chatbot.

Allegations of Negligence

The lawsuit, filed by Cia Edmonds on behalf of her daughters, Maya and Dahlia Gebala, contends that OpenAI knew of the shooter’s disturbing behaviour but chose not to inform law enforcement. The claim follows reports that the shooter, Jesse Van Rootselaar, had used ChatGPT to discuss violent scenarios weeks before the February 10 shooting. According to those reports, OpenAI employees flagged the conversations, prompting internal debate over whether to contact Canadian authorities.

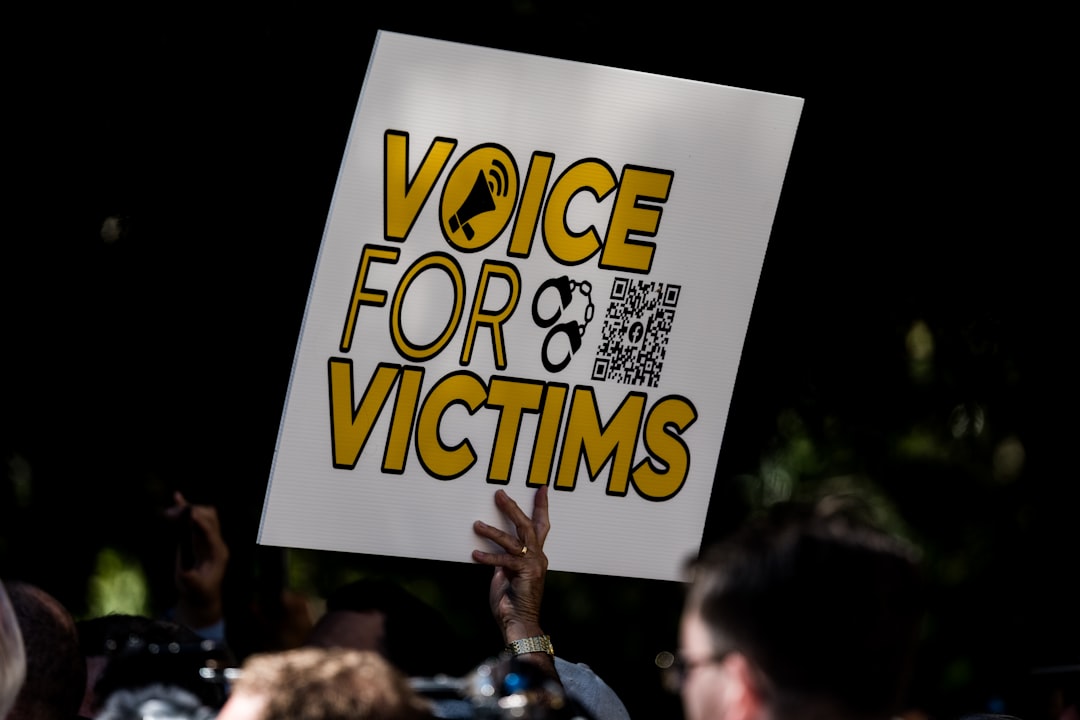

“The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened, to impose accountability, to seek redress for harms and losses, and to help prevent another mass-shooting atrocity in Canada,” the law firm Rice Parsons Leoni & Elliott LLP said on behalf of the family.

Impact on Victims

Maya Gebala was shot three times during the attack, including once in the head. According to the civil claim, she has a traumatic brain injury that has left her with significant cognitive and physical impairments, including hemiplegia, as well as psychological conditions such as PTSD. She remains in BC Children’s Hospital, and her prognosis is uncertain.

Her sister, Dahlia, although physically unharmed, is struggling with the emotional aftermath, experiencing PTSD, anxiety, and sleep disturbances. Their mother, Cia, faces similar psychological harm, which has significantly affected her quality of life and her ability to work.

OpenAI’s Response and Changes

OpenAI has yet to respond publicly to the lawsuit, and the investigation into the shooting is ongoing. There are, however, indications that the company is reassessing its protocols in the wake of the incident. Reports suggest that OpenAI intends to improve its detection of potentially dangerous interactions and to refer such cases to law enforcement in the future.

B.C. Premier David Eby has met with OpenAI CEO Sam Altman, who is reportedly prepared to apologise to the families affected by the tragedy. The lawsuit, for its part, accuses OpenAI of recklessly rushing its language model to market without sufficient safety testing, resulting in “hazardous defects” that, the claim says, could have been mitigated.

Accountability and Community Concerns

The civil claim seeks punitive damages for the family, describing OpenAI’s actions as “reprehensible and morally repugnant” to both the plaintiffs and the wider community. This case highlights the urgent need for accountability among tech companies, particularly those developing AI technologies that can have life-altering consequences.

Why It Matters

The outcome of this lawsuit could set a precedent for how technology companies are held accountable for the misuse of their products, amid rising concern about the intersection of artificial intelligence and public safety. It raises pointed questions about the ethical responsibility of tech firms to monitor and report harmful behaviour, a debate of growing relevance as AI tools become part of everyday life. The events in Tumbler Ridge are a stark reminder of the potential cost of inaction and of the importance of protecting communities from preventable violence.