In the wake of a devastating school shooting in Tumbler Ridge, British Columbia, which claimed the lives of eight individuals, including six children, Sam Altman, CEO of OpenAI, is set to issue an apology to the victims’ families. This announcement comes after a significant conversation between Altman, Premier David Eby, and Mayor Darryl Krakowka, where the implications of the incident and the role of AI technology were discussed.

Context of the Tragedy

On February 10, a horrific shooting unfolded at a local school, leaving the community in mourning. The tragedy was compounded by revelations that the shooter, 18-year-old Jesse Van Rootselaar, had engaged in troubling conversations on OpenAI’s ChatGPT platform months before the incident. Although these conversations raised alarms within the company, they were not reported to law enforcement.

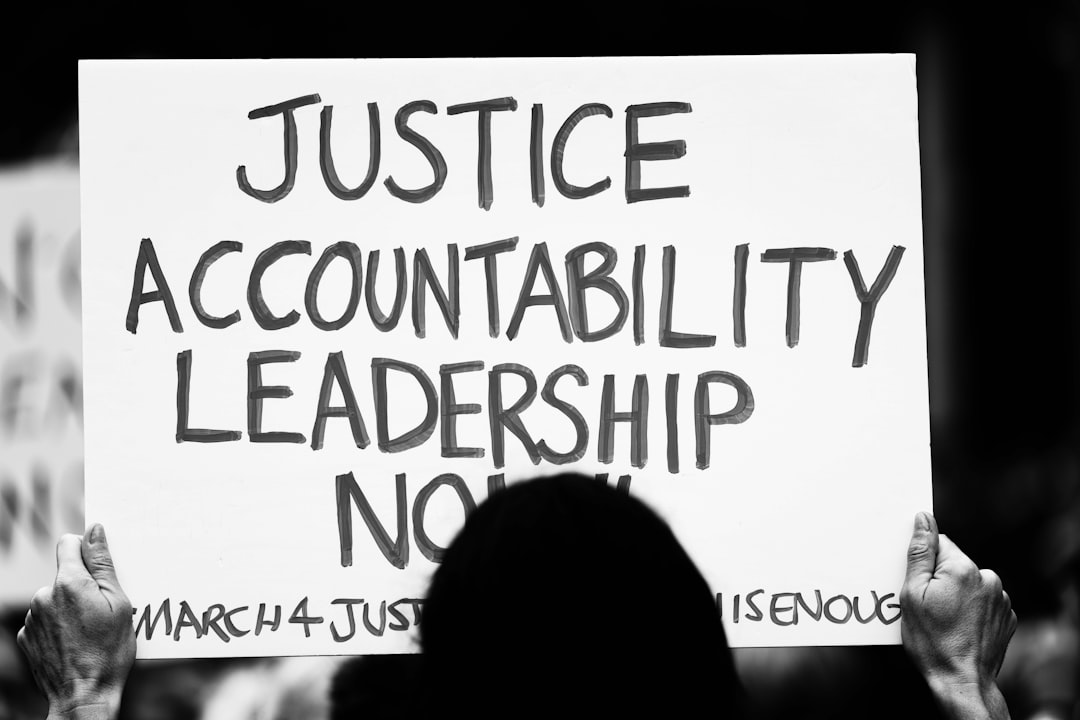

After their video call on Thursday, Premier Eby expressed his discontent, stating that OpenAI had a responsibility to alert authorities. “OpenAI had the opportunity to notify authorities and potentially even to stop this tragedy from happening,” he remarked, highlighting the urgent need for enhanced reporting protocols within AI companies.

The Call for Accountability

During the meeting, Premier Eby refrained from probing into the specifics of the conversations that occurred on ChatGPT, opting instead to respect the ongoing criminal investigation. He noted, “I made the very specific decision not to ask about the content… I want the police to release information as they feel that it’s appropriate.”

However, he did demand that OpenAI take a proactive stance on regulatory standards at the federal level. Eby’s insistence on direct dialogue with Altman, rather than lower-tier executives, underscores the gravity of the situation and the community’s need for accountability from tech giants.

A Push for Regulatory Change

In light of this tragic event, Eby has called for a national duty to report, which would set a minimum threshold at which AI companies must report alarming content. He indicated that OpenAI has agreed to consider recommendations for regulatory measures, stating, “I don’t believe that OpenAI’s current standard is sufficient where there is an option to report.”

The conversation has sparked wider discussions about the interaction between AI firms and law enforcement. Federal AI Minister Evan Solomon met with Altman to communicate Ottawa’s demands, emphasising the necessity for Canadian experts to assess flagged conversations that may indicate a risk of harm.

Legislative Gaps and Future Implications

As it stands, Canada lacks comprehensive AI legislation and specific rules governing chatbots, unlike several other jurisdictions. Experts have suggested that forthcoming legislation addressing online harms should extend to AI technologies, ensuring that incidents like the one in Tumbler Ridge do not happen again.

OpenAI has stated that it has since revised its policies to improve the identification of potential threats. However, the question remains whether these changes will be sufficient to prevent future tragedies.

Why It Matters

The Tumbler Ridge shooting serves as a stark reminder of the urgent need for robust regulations surrounding AI technologies. As communities grapple with the aftermath of such violence, the role of companies like OpenAI in protecting the public must be scrutinised. Establishing clear reporting protocols could be vital in preventing future incidents, ensuring that technology serves to protect rather than endanger lives. The impact of this tragedy extends beyond Tumbler Ridge, highlighting a critical juncture in the relationship between technology, mental health, and public safety that requires immediate attention and action.