In a significant shake-up at OpenAI, a key figure in the robotics division has stepped down, citing concerns about the ethical frameworks governing the organisation’s recent collaboration with the Pentagon. The resignation raises crucial questions about military partnerships in the rapidly evolving field of artificial intelligence.

Concerns Over Ethical Guidelines

The departing executive, who played a pivotal role in shaping OpenAI’s robotics initiatives, expressed unease about the lack of clear ethical boundaries on the use of AI technologies in military applications. In a statement, they noted that the safeguards intended to govern AI usage had not been adequately established before the agreement with the Department of Defense was announced. The resignation has sparked a broader debate about the ethical responsibilities that tech companies bear when entering into partnerships with military entities.

The resignation underscores the tension between technological advancement and ethical considerations. As AI becomes increasingly integrated into various sectors, including defence, the necessity for robust ethical guidelines becomes ever more pressing. The former executive’s departure signals a potential rift within OpenAI, reflecting concerns that the organisation may be prioritising commercial collaborations over its foundational commitment to responsible AI development.

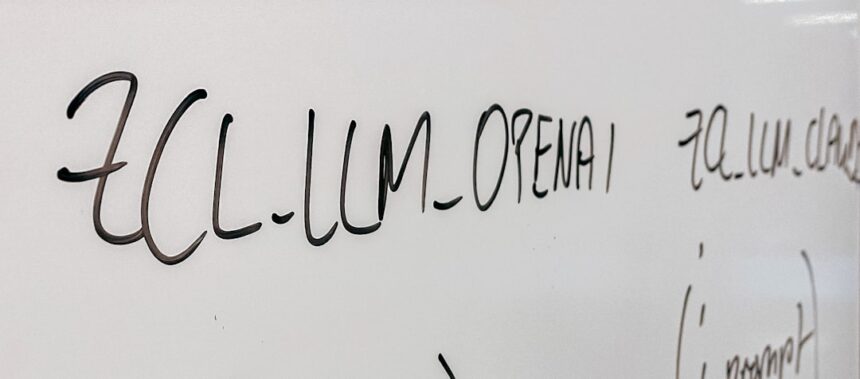

OpenAI’s Strategic Moves

OpenAI has been at the forefront of AI research, pushing the boundaries of what is possible with machine learning and robotics. However, the announcement of its collaboration with the Pentagon has drawn a mixed reaction from the tech community and the public alike. While some view it as a necessary step in advancing national security capabilities, others fear it may lead to the militarisation of AI technologies.

The agreement is said to involve the development of AI tools that could enhance military operations, prompting questions about the potential consequences of such technologies in combat scenarios. Critics argue that without stringent oversight, the use of AI in warfare could lead to unpredictable outcomes, including an escalation in conflict or the deployment of autonomous weapons systems without adequate human control.

The Broader Implications

This development is not an isolated incident; it reflects a growing trend of leading tech firms engaging with government and military sectors. As these partnerships expand, the debate over the ethical implications of AI in warfare and surveillance becomes more critical. The resignation of OpenAI’s robotics leader serves as a cautionary tale about the need for transparency and accountability in AI development, especially where it intersects with military interests.

Moreover, this situation may prompt other tech companies to reassess their engagements with the military. The public’s trust in AI technologies hinges on the belief that these tools are being developed with a strong ethical framework, ensuring that they are used to enhance human life rather than to jeopardise it.

Why It Matters

The departure of a senior executive from OpenAI highlights a significant ethical dilemma surrounding the use of AI in military applications. As society grapples with the implications of technology in warfare, this incident serves as a stark reminder of the responsibility that tech leaders hold. It underscores the necessity for clear ethical guidelines and transparency in AI development, particularly when military partnerships are involved. The future of AI relies not only on technological innovation but also on a commitment to ensuring that such advancements serve humanity positively and ethically.