In a significant development following the tragic mass shooting in Tumbler Ridge, British Columbia, Sam Altman, CEO of OpenAI, is set to issue an apology to the affected families. This decision comes after discussions with Premier David Eby and local Mayor Darryl Krakowka, who have expressed concerns about how the company handled the shooter’s earlier conversations on its ChatGPT platform. The discussions highlighted the potential for earlier intervention that could have prevented this heartbreaking incident.

The Background of the Tragedy

On February 10, 2023, a devastating shooting claimed the lives of eight individuals, including six children under the age of 14. The perpetrator, 18-year-old Jesse Van Rootselaar, had engaged in troubling conversations on ChatGPT months prior to the incident. These conversations raised alarms within OpenAI, yet the company did not escalate the concerns to law enforcement. Premier Eby has underscored the gravity of this oversight, stating that OpenAI may have missed an opportunity to alert authorities and potentially avert the tragedy.

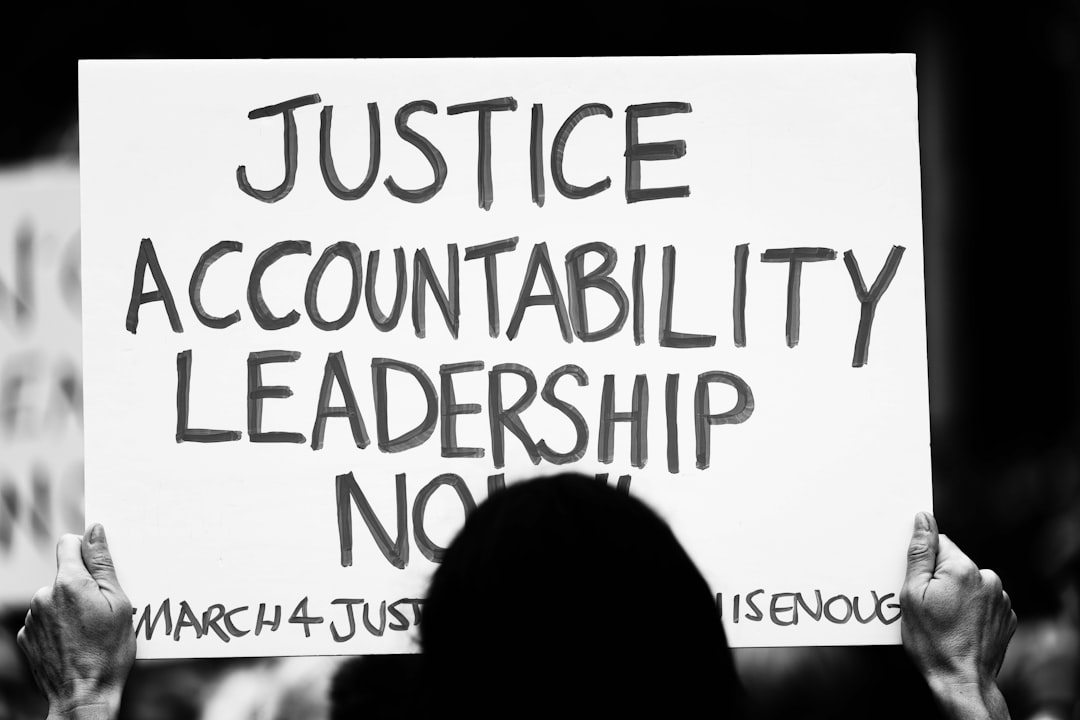

Eby remarked, “OpenAI had the opportunity to notify authorities and potentially even to stop this tragedy from happening.” While he acknowledged that mental health issues and the shooter’s access to firearms also warrant scrutiny, the failure to report these critical conversations has sparked outrage and calls for accountability.

A Call for Accountability

In a recent video conference, Eby pressed Altman for an apology, rejecting the idea of engaging with lower-tier executives. He framed the meeting as an essential step towards addressing the profound implications of AI technology in society. “It’s not acceptable that it’s up to the companies about whether or not to report, and that needs to change,” Eby asserted.

The Premier expressed his belief that current reporting standards are insufficient and called for a national regulatory framework that requires AI companies to report concerning interactions. He noted that OpenAI has agreed to advocate for such measures, indicating a potential shift in corporate responsibility regarding AI technologies.

The Role of Government and Experts

In parallel discussions, Federal AI Minister Evan Solomon met with Altman to articulate the Canadian government’s expectations. Solomon emphasised the necessity for Canadian experts in mental health and law to evaluate flagged conversations on AI platforms for signs of imminent harm. This dialogue reflects growing concerns about the role of artificial intelligence in public safety and the need for robust guidelines governing AI interactions with law enforcement.

OpenAI acknowledged that conversations flagged for violence had not met its previous criteria for “credible and imminent planning.” However, the company has since revised its policies to better identify and respond to warning signs of potential violence.

The Future of AI Regulation

Canada currently lacks comprehensive AI legislation, particularly rules tailored for chatbot technology. This gap has prompted experts to advocate for the inclusion of chatbots in upcoming online harms legislation, aligning them with existing regulations for social media platforms. As the conversation surrounding AI accountability intensifies, the need for clear standards is becoming increasingly urgent.

A spokesperson for OpenAI was not available to comment on the planned apology, but the company’s ongoing dialogue with policymakers suggests a willingness to engage with regulatory frameworks.

Why It Matters

The tragic events in Tumbler Ridge underscore the complex relationship between emerging technologies and public safety. As communities grapple with the aftermath of violence, the responsibility of AI companies to monitor and report concerning user interactions is under the spotlight. This incident could catalyse significant changes in the legislation that governs AI usage, setting precedents for how similar situations are handled in the future. The outcome of these discussions may not only influence Canadian policy but could also resonate globally as nations seek to balance innovation with safety.