In an escalating standoff that has captured the attention of the tech world, the US Department of Defense (DoD) and Anthropic, an AI startup led by Dario Amodei, are embroiled in a heated dispute over the ethical use of artificial intelligence in military operations. At the crux of the dispute is Anthropic’s firm refusal to allow its AI, known as Claude, to be used for domestic surveillance or lethal autonomous weaponry. As this saga unfolds, it raises critical questions about the intersection of technology, ethics, and national security.

Anthropic’s Ethical Stance Under Fire

Anthropic has positioned itself as a champion of safety in AI development, and that commitment lies at the heart of its conflict with the Pentagon. The DoD recently designated Anthropic a “supply chain risk” over its refusal to meet military demands. The company’s commitment to ethical AI has become a double-edged sword: it has helped Anthropic cultivate a responsible brand, but its dealings with the military have left it at a crossroads between profit and principle.

In a recent interview, Sarah Kreps, a technology policy expert and former member of the US Air Force, highlighted the intricacies of this evolving relationship. “The military’s urgent need for technology sometimes conflicts with the ethical frameworks that tech companies like Anthropic strive to uphold,” she explained. The military’s imperative to act swiftly often clashes with the more cautious approach such companies advocate.

The Cultural Divide Between Tech and Military

Anthropic’s predicament illustrates the cultural rift between tech firms and military organisations. Military acquisitions have historically been slow, hindered by a labyrinth of regulations and ethical considerations. Kreps emphasised that technologies developed for civilian use differ significantly from those required for classified military operations. “The challenge is that the military cannot afford to wait for a military-grade version of technology when the commercial variant is already available,” she noted.

This cultural divide is particularly pronounced given Anthropic’s previous collaborations with the Pentagon and with companies such as Palantir, whose applications of AI have drawn scrutiny. Kreps remarked that Anthropic’s current dilemma seems to stem from a misalignment between its corporate ethos and its military engagements, particularly regarding mass surveillance and lethal weapons.

Navigating the Labyrinth of National Security

One of the most pressing questions arising from this conflict is the role of private tech companies in national security. Kreps pointed out that the military’s argument hinges on the notion that in matters of national defence, private firms should not be able to impede operational decisions. “If there’s an immediate national security issue, we shouldn’t have to call Dario Amodei for approval,” she stated.

The dispute recalls past incidents such as the FBI’s demand that Apple unlock the iPhone of a mass shooter. Apple refused, citing privacy concerns, and the case sparked debate over the extent of government power over technology. The key difference is that Apple retained control over access to its devices, whereas once Anthropic’s AI is handed over to the military, the company loses control over how its technology is employed, potentially enabling uses that contradict its ethical guidelines.

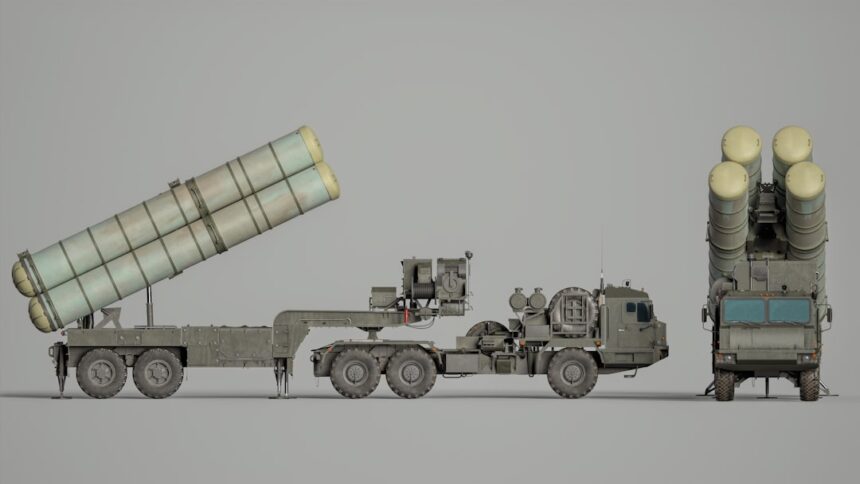

The Future of AI in Warfare

As the debate continues, the implications of AI in military contexts are proving both vast and complex. Kreps noted that while AI can significantly enhance military capabilities, particularly by processing large volumes of intelligence data, it also raises ethical questions that cannot be overlooked. “AI excels in pattern recognition, but when it comes to identifying human targets, the stakes are much higher,” she cautioned.

With the technology advancing rapidly, the military’s reliance on AI is likely to grow, making it imperative for companies like Anthropic to navigate this tricky landscape carefully. The potential for misuse in counter-terrorism operations, where the line between combatants and civilians is often blurred, highlights the urgent need for clear ethical guidelines.

Why It Matters

The ongoing confrontation between Anthropic and the Pentagon not only exposes the tension between technological innovation and ethical standards but also marks a pivotal moment for the future of AI in warfare. As the military seeks to harness AI’s capabilities, the need for companies to maintain their ethical commitments becomes paramount. This situation could set precedents for how AI is regulated, deployed, and scrutinised in conflict zones, making it a defining issue for policymakers and tech leaders alike. Ultimately, the stakes extend beyond business and technology to the very principles of humanity in warfare.